Here’s a comparison of these models with the same prompt and seed. Triggering keyword: mdjrny-v4 style Model comparison It has a different aesthetic and is a good general-purpose model. Open Journey is a model fine-tuned with images generated by Mid Journey v4. One drawback (at least to me) is that it produces females with disproportional body shapes. It’s useful for casting celebrities to amine style, which can then be blended seamlessly with illustrative elements.

You can use danbooru tags (like 1girl, white hair) in the text prompt. Anything V3 Anything v3 model.Īnything V3 is a special-purpose model trained to produce high-quality anime-style images. Include wardrobe terms like “dress” and “jeans” in the prompt.įind more realistic photo-style models in this post.

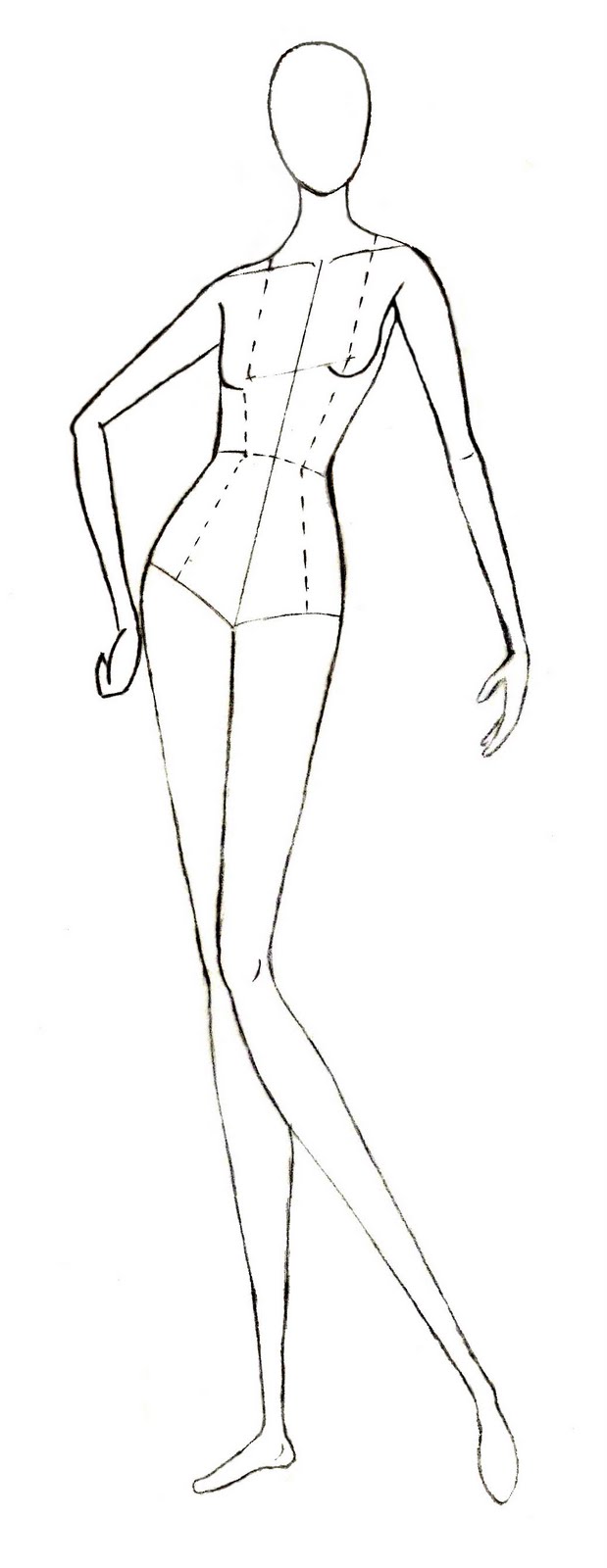

It has a high tendency to generate nudes. Interestingly, contrary to what you might think, it’s quite good at generating aesthetically pleasing clothing.į222 is good for portraits. F222 F222į222 is trained originally for generating nudes, but people found it helpful in generating beautiful female portraits with correct body part relations. In my experience, v1.5 is a fine choice as the initial model and can be used interchangeably with v1.4. Like v1.4, you can treat v1.5 as a general-purpose model. It produces slightly different results compared to v1.4 but it is unclear if they are better. The model page does not mention what the improvement is. The model is based on v1.2 with further training. V1.5 is released in Oct 2022 by Runway ML, a partner of Stability AI. Below is a list of models that can be used for general purposes. There are thousands of fine-tuned Stable Diffusion models. I will cover the v1 models in this section and the v2 models in the next section. There are two groups of models: v1 and v2. In layman’s terms, it’s like using existing words to describe a new concept. Only the text embedding network is fine-tuned while keeping the rest of the model unchanged. A new keyword is created specifically for the new object. The goal is similar to Dreambooth: Inject a custom subject into the model with only a few examples. There’s another less popular fine-tuning technique called textual inversion (sometimes called embedding). A model trained with Dreambooth requires a special keyword to condition the model. You can take a few pictures of yourself and use Dreambooth to put yourself into the model. It works with as few as 3-5 custom images. For example, you can train Stable Diffusion v1.5 with an additional dataset of vintage cars to bias the aesthetic of cars towards the sub-genre.ĭreambooth, initially developed by Google, is a technique to inject custom subjects into text-to-image models. 
They both start with a base model like Stable Diffusion v1.4 or v1.5.Īdditional training is achieved by training a base model with an additional dataset you are interested in. Two main fine-tuning methods are (1) Additional training and (2) Dreambooth. Instead of tinkering with the prompt, you can fine-tune the model with images of that sub-genre.

But it could be difficult to generate images of a sub-genre of anime. For example, it can and will generate anime-style images with the keyword “anime” in the prompt. Stable diffusion is great but is not good at everything. It takes a model that is trained on a wide dataset and trains a bit more on a narrow dataset.Ī fine-tuned model will be biased toward generating images similar to your dataset while maintaining the versatility of the original model. Fine-tuning is a common technique in machine learning.
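Comparing models with the same prompt and seed works because the seed fixes the random noise a diffusion sampler starts from, so differences in the output come from the model weights rather than from chance. The sketch below is a toy illustration in plain Python, not Stable Diffusion code: `sample_noise` stands in for drawing the initial latent, and `toy_base` and `toy_fine_tuned` are hypothetical stand-ins for two models. It also assumes one practical detail worth checking in your own tool: a random generator’s state advances after each draw, so it should be re-seeded before each model’s run rather than shared across runs.

```python
import random
from typing import Callable

# Toy illustration only: a "model" here is any function that maps a prompt
# and starting noise to an output. Real samplers start from random latent noise.
Model = Callable[[str, list[float]], list[float]]


def sample_noise(rng: random.Random, size: int = 4) -> list[float]:
    """Draw starting noise (a stand-in for the sampler's initial latent)."""
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


def compare_models(models: dict[str, Model], prompt: str,
                   seed: int) -> dict[str, list[float]]:
    """Run each model on the same prompt, re-seeding before every run so
    all models start from identical noise."""
    results = {}
    for name, model in models.items():
        rng = random.Random(seed)  # fresh generator per model, same seed
        results[name] = model(prompt, sample_noise(rng))
    return results


def toy_base(prompt: str, noise: list[float]) -> list[float]:
    # Hypothetical stand-in for a base model: passes the noise through.
    return list(noise)


def toy_fine_tuned(prompt: str, noise: list[float]) -> list[float]:
    # Hypothetical stand-in for a fine-tuned model: different weights,
    # modeled here as a different transformation of the same noise.
    return [0.5 * x for x in noise]


results = compare_models(
    {"base": toy_base, "fine_tuned": toy_fine_tuned},
    prompt="a castle on a hill, mdjrny-v4 style",
    seed=42,
)
for name, output in results.items():
    print(name, output)

# Pitfall: reusing one generator without re-seeding gives different noise.
shared = random.Random(42)
print(sample_noise(shared) == sample_noise(shared))
```

If you use the Diffusers library, its pipelines accept a `generator` argument for this purpose; creating a new, identically seeded generator for each model is the equivalent of the re-seeding above.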
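Textual inversion, as described in this post, learns only the embedding of a new keyword and leaves the rest of the model untouched. The following is a toy analog under loudly stated assumptions: the “model” is a hypothetical frozen 2×2 linear map (`FROZEN_W`), the vocabulary holds two made-up 2-D word vectors, and the goal is to make the frozen model’s output match a target vector standing in for the new subject. Real textual inversion optimizes a diffusion denoising loss through the frozen text encoder and image model; this sketch keeps only the core idea of gradient descent on a single new embedding, initialized from an existing word in the spirit of “using existing words to describe a new concept.”

```python
# Toy analog of textual inversion (NOT the real Stable Diffusion procedure).
# Assumptions: a hypothetical frozen 2x2 linear "model" and a made-up
# 2-D vocabulary. Only the new keyword's embedding is ever updated.

Vector = list[float]
Matrix = list[list[float]]

FROZEN_W: Matrix = [[1.0, 0.5], [0.0, 2.0]]  # frozen "network" weights
embeddings: dict[str, Vector] = {"cat": [1.0, 0.0], "dog": [0.0, 1.0]}


def forward(w: Matrix, e: Vector) -> Vector:
    """Frozen model: a plain matrix-vector product."""
    return [w[0][0] * e[0] + w[0][1] * e[1], w[1][0] * e[0] + w[1][1] * e[1]]


def loss(w: Matrix, e: Vector, target: Vector) -> float:
    """Squared error between the model output and the target."""
    return sum((o - t) ** 2 for o, t in zip(forward(w, e), target))


def train_new_token(w: Matrix, init: Vector, target: Vector,
                    lr: float = 0.05, steps: int = 500) -> Vector:
    """Gradient descent on the new embedding only; w is read, never written."""
    e = list(init)  # copy so the initializer word's vector is not modified
    for _ in range(steps):
        out = forward(w, e)
        g_out = [2.0 * (o - t) for o, t in zip(out, target)]  # dL/d(out)
        # Chain rule: dL/de = W^T @ dL/d(out)
        grad = [
            w[0][0] * g_out[0] + w[1][0] * g_out[1],
            w[0][1] * g_out[0] + w[1][1] * g_out[1],
        ]
        e = [ei - lr * gi for ei, gi in zip(e, grad)]
    return e


# Hypothetical target standing in for "what images of the new subject look like".
target = [2.0, 1.0]
learned = train_new_token(FROZEN_W, embeddings["cat"], target)
embeddings["<my-subject>"] = learned  # the new keyword joins the vocabulary
print(learned, loss(FROZEN_W, learned, target))
```

The design choice to mirror here is that `FROZEN_W` and the existing entries in `embeddings` are never modified; the new keyword gets its meaning entirely from the one vector that training adjusts.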
